To address research questions 3 and 4, the project needs to analyse and test future societal scenarios for context-specific technology production in AI. Modelling and simulation provide appropriate methodologies for scenario analysis and for the appraisal of potential social futures. A suitable model should be capable of computationally representing the “AI FORA world”: a world in which heterogeneous societal organisations interact in innovation networks embedded in their specific socio-cultural contexts, negotiating and co-producing AI social assessment technology. Simulation experiments can then be designed to test the effects of behavioural change, changing environments, value change, and policy interventions. This requires an intelligent agent framework within agent-based modelling (ABM).

Using a companion modelling approach for stakeholder-driven participative modelling, AI FORA will build on and further develop the agent-based SKIN simulation platform for policy research, which models the complex network dynamics of heterogeneous actors involved in technological innovation. Existing policy modelling applications of SKIN include IT policy as well as simulation studies on Responsible Research and Innovation (RRI) governance in IT, which can serve as a starting point for the AI FORA application. In co-design with case study stakeholders, the new model, AI FORA SKIN, will computationally reproduce the six case studies as artificial innovation ecologies located in different societal value systems. Sensitivity analysis will investigate their commonalities and differences, i.e. the degree of context and path dependency they display. For this modelling approach, the project can again rely on Hofstede’s cultural dimensions theory, which has been implemented in social simulation before.

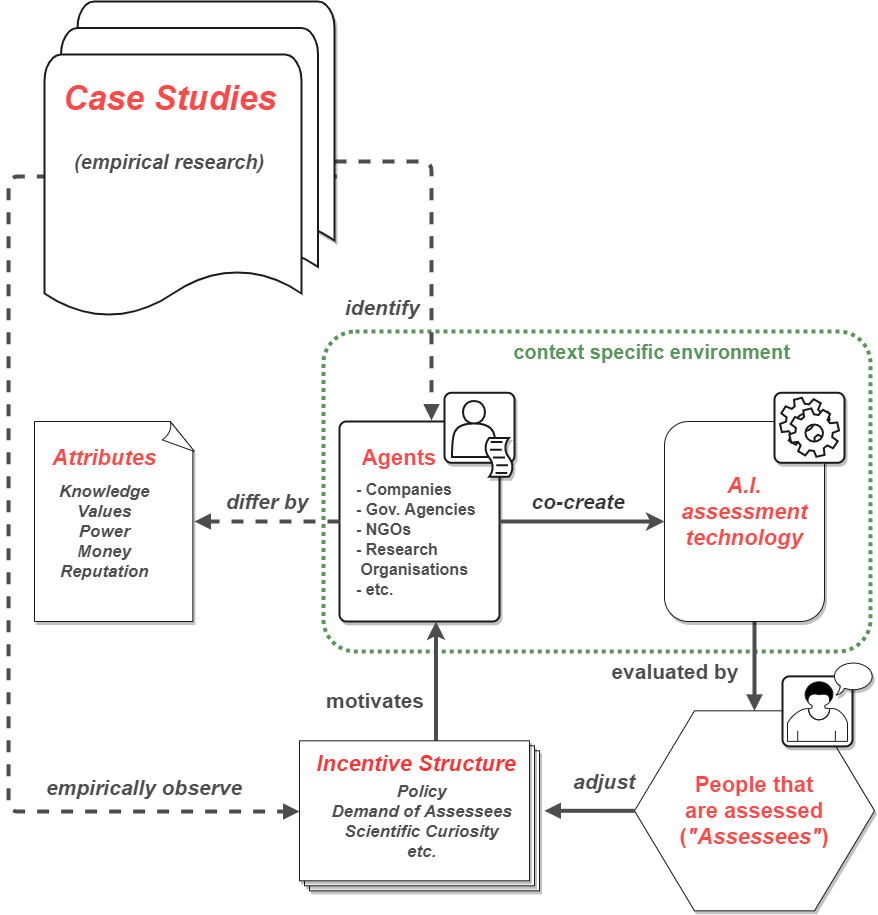

Agents in AI FORA SKIN can be private companies, government agencies, public service providers, NGOs, research organisations and other actors identified as relevant by empirical case study research. Agents differ in their inter- or transdisciplinary knowledge backgrounds, value attitudes, and resources such as power, money and reputation. In the basic loop of the model, agents are incentivised by policy, user demand, scientific curiosity or other incentive structures empirically observed in the case studies to envisage, negotiate, co-create and co-produce new AI social assessment technology. Agents are enabled and constrained by context-specific institutional environments and by political, economic or regulatory frameworks. The performance of AI-based social assessment is evaluated by users and societal observers (e.g. public policy analysts, assessed individuals, service-supplying organisations, the media and the law). Their feedback flows into the agents’ technology production process and results in new measures for incentivising agents, which starts the loop again.
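To make the loop more concrete, the following minimal Python sketch illustrates one possible reading of it: incentivise, co-produce, evaluate, feed back. It is not the SKIN platform or the planned AI FORA SKIN model. All names (`Agent`, `Context`, `incentivise`, `co_produce`, `evaluate`, `run`), the single `fairness` value attitude, the numeric scales and the feedback rule are assumptions chosen purely for illustration.

```python
import random
from dataclasses import dataclass


@dataclass
class Agent:
    name: str
    kind: str          # e.g. "company", "government agency", "NGO"
    knowledge: set     # knowledge backgrounds (hypothetical labels)
    values: dict       # value attitudes on an assumed 0..1 scale
    resources: float   # aggregate of power, money, reputation (assumed)


@dataclass
class Context:
    # Assumed single indicator of the regulatory framework:
    # 0 = permissive, 1 = strict.
    regulation_strictness: float


def incentivise(agents, incentive, rng):
    """Each agent becomes active with probability equal to the incentive."""
    return [a for a in agents if rng.random() < incentive]


def co_produce(partners, context):
    """Pool the partners' knowledge into one technology (toy rule)."""
    if len(partners) < 2:
        return None  # co-production needs at least two partners
    knowledge = set().union(*(a.knowledge for a in partners))
    fairness = sum(a.values["fairness"] for a in partners) / len(partners)
    # Assumed constraint: the context blocks technology whose
    # fairness falls below the regulatory threshold.
    if fairness < context.regulation_strictness:
        return None
    return {"knowledge": knowledge, "fairness": fairness}


def evaluate(technology):
    """Toy score in [0, 1] standing in for users and societal observers."""
    breadth = min(len(technology["knowledge"]) / 4, 1.0)
    return 0.5 * breadth + 0.5 * technology["fairness"]


def run(agents, context, steps=10, seed=0):
    rng = random.Random(seed)  # seeded for reproducible experiments
    incentive = 0.5
    history = []
    for _ in range(steps):
        active = incentivise(agents, incentive, rng)
        technology = co_produce(active, context)
        score = evaluate(technology) if technology else 0.0
        # Assumed feedback rule: weak performance raises the incentive,
        # strong performance lowers it; then the loop starts again.
        incentive = min(1.0, max(0.1, incentive + 0.2 * (0.5 - score)))
        history.append((len(active), score, incentive))
    return history


agents = [
    Agent("A", "company", {"machine learning"}, {"fairness": 0.4}, 1.0),
    Agent("B", "government agency", {"law", "welfare policy"}, {"fairness": 0.8}, 2.0),
    Agent("C", "NGO", {"ethics"}, {"fairness": 0.9}, 0.5),
]
history = run(agents, Context(regulation_strictness=0.3), steps=5)
```

Each entry of `history` records the number of active agents, the evaluation score and the updated incentive for one pass through the loop. A scenario experiment in this toy setting would amount to rerunning `run` with a different `Context` or a different agent population and comparing the resulting histories.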